Hackmotion, an app that helps you relieve stress

In every hackathon, we see people working around the clock to develop ideas that are new in every respect.

Hackmotion, an app built during the hackathon conducted by WACHacks in association with HackerEarth, is a striking example.

Read on to know more about this app.

What is Hackmotion?

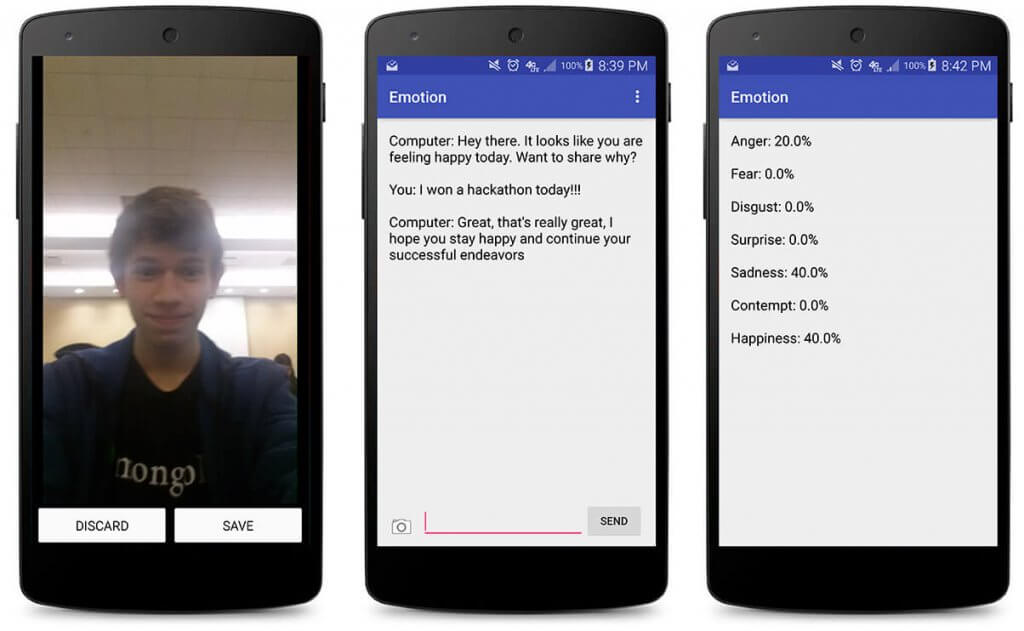

Hackmotion is an app that helps users deal with stress. It lets users hold a friendly conversation with their phone, and it tracks their emotions and conversations in a journal.

The app identifies emotions that students commonly experience. It is designed to improve their social well-being by encouraging them to express themselves through journaling.

Technologies/platforms/languages

- Android Studio: To develop and test the app

- Microsoft Face API: To detect and analyze faces in the captured picture

- Microsoft's Emotion API: To determine what emotion the person in the picture is feeling

- Clarifai API: To identify objects in the picture when no face is detected

- Java: For internal logic

- XML: For layouts

Functionality

When the app opens, users see a friendly UI with an option to take a picture by tapping the camera icon. The app processes this image with the Microsoft Face API and, depending on whether a face is found, calls one of two APIs:

- If the image contains a face, Microsoft's Emotion API is called. It analyzes the face and determines what emotion the person is feeling. Once the emotion is recognized, the phone starts talking to the user in a way that suits his or her mood.

- If the image contains no face, the Clarifai API is called. It determines the most significant object in the picture. For example, if the user takes a picture of leftover food, Clarifai first recognizes the food as an object and then determines its type. For accuracy, the app asks the user questions related to the picture, and the conversation starts once the correct emotion is identified.
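The face-or-object branching described above can be sketched in Java, the language the team used for the app's internal logic. This is a minimal sketch under stated assumptions, not the team's code: `FaceDetector`, `EmotionScorer` and `ObjectTagger` are hypothetical interfaces standing in for the Microsoft Face API, Microsoft's Emotion API and the Clarifai API (no real client-library calls are shown), and picking the single highest-scoring emotion label is an assumed way of deciding the user's mood.

```java
import java.util.List;
import java.util.Map;

public class HackmotionPipeline {

    // Hypothetical stand-in for the Microsoft Face API: how many faces are in the image.
    interface FaceDetector {
        int countFaces(byte[] image);
    }

    // Hypothetical stand-in for Microsoft's Emotion API: a confidence score per emotion label.
    interface EmotionScorer {
        Map<String, Double> scoreEmotions(byte[] image);
    }

    // Hypothetical stand-in for the Clarifai API: object tags, most significant first.
    interface ObjectTagger {
        List<String> tagObjects(byte[] image);
    }

    private final FaceDetector faces;
    private final EmotionScorer emotions;
    private final ObjectTagger objects;

    HackmotionPipeline(FaceDetector faces, EmotionScorer emotions, ObjectTagger objects) {
        this.faces = faces;
        this.emotions = emotions;
        this.objects = objects;
    }

    // Face found: use the top-scoring emotion. No face: fall back to the object tagger.
    String analyze(byte[] image) {
        if (faces.countFaces(image) > 0) {
            String top = emotions.scoreEmotions(image).entrySet().stream()
                    .max(Map.Entry.comparingByValue())
                    .map(Map.Entry::getKey)
                    .orElse("neutral"); // assumed default when no scores come back
            return "emotion:" + top;
        }
        List<String> tags = objects.tagObjects(image);
        return tags.isEmpty() ? "object:unknown" : "object:" + tags.get(0);
    }

    public static void main(String[] args) {
        byte[] photo = new byte[0]; // placeholder for real camera bytes

        // Illustrative fake responses; the labels and scores are made up for the demo.
        HackmotionPipeline withFace = new HackmotionPipeline(
                img -> 1,
                img -> Map.of("happiness", 0.82, "neutral", 0.15, "sadness", 0.03),
                img -> List.of());
        System.out.println(withFace.analyze(photo)); // emotion:happiness

        HackmotionPipeline noFace = new HackmotionPipeline(
                img -> 0,
                img -> Map.of(),
                img -> List.of("food", "plate"));
        System.out.println(noFace.analyze(photo)); // object:food
    }
}
```

Keeping each service behind its own small interface makes the "call Clarifai only when no face is detected" rule easy to test with fakes, which is one of the challenges the team mentions.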

The app talks to users in a way that makes them feel they are talking to a real person. All conversations, along with the objects detected by Clarifai, are recorded in the app's journal section, which users can refer to later. The app also has a statistics section where users can see the percentage of each emotion they have felt since downloading the app.
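The statistics section can be illustrated with a small journal that counts entries per emotion. This is a hypothetical sketch: the `EmotionJournal` class, its `add` method and the assumption that each journal entry carries exactly one emotion label are illustrative choices, not details taken from the app.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class EmotionJournal {

    // Hypothetical journal record: the detected emotion plus a note about the conversation.
    static final class JournalEntry {
        final String emotion;
        final String note;

        JournalEntry(String emotion, String note) {
            this.emotion = emotion;
            this.note = note;
        }
    }

    private final List<JournalEntry> entries = new ArrayList<>();

    void add(String emotion, String note) {
        entries.add(new JournalEntry(emotion, note));
    }

    // Percentage of journal entries per emotion; LinkedHashMap keeps first-seen order.
    Map<String, Double> emotionPercentages() {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (JournalEntry e : entries) {
            counts.merge(e.emotion, 1, Integer::sum);
        }
        Map<String, Double> percentages = new LinkedHashMap<>();
        for (Map.Entry<String, Integer> c : counts.entrySet()) {
            percentages.put(c.getKey(), 100.0 * c.getValue() / entries.size());
        }
        return percentages; // empty journal yields an empty map, no division happens
    }

    public static void main(String[] args) {
        EmotionJournal journal = new EmotionJournal();
        journal.add("happiness", "Talked about a good exam result");
        journal.add("sadness", "Missed a friend's call");
        journal.add("happiness", "Shared a lunch photo");
        journal.add("neutral", "Quick check-in");
        System.out.println(journal.emotionPercentages());
        // {happiness=50.0, sadness=25.0, neutral=25.0}
    }
}
```

The same per-emotion counts could also feed the bar or line graph listed under the team's future plans.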

Challenges

Here are some of the challenges that the team faced while building this application:

- Getting the Microsoft Face and Emotion APIs to work in harmony

- Creating the algorithm that analyzes the face

- Making sure that Clarifai API is called when no face is detected

What’s Next?

The project creators, Brian Cherin and Kaushik Prakash, plan to:

- Improve the app's efficiency and accuracy.

- Display the frequency of each emotion as a bar or line graph.

- Improve the conversational flow of the chat/journal section by displaying the specific time of each entry along with any notes the user has added.

If you love this app and are inspired by it, check out our list of hackathons to participate in. Register, code, and create solutions to real-life problems, and stand a chance to win awesome prizes while you're at it!
